Some of my earliest and fondest memories center around family dinners at my grandpa and grandma’s house. For Thanksgiving, for example, there was turkey, mashed potatoes, gravy, sweet potatoes, green beans, olives, rolls, salad, and several pies for dessert. But beyond the vast array of food, it was fun to see my grandparents, parents, three aunts and three uncles, and various numbers of cousins. On a few occasions, my second cousin George appeared, and early on, so did my Aunt Mary and Aunt Emma. All of these people were so different! We had more fun because we were all there together.

You have no doubt heard “The Whole is Greater than the Sum of its Parts” before, and I think this is what it means when applied to a family setting. All families argue (although ours never did in these larger holiday settings). And almost all families love. But a fundamental question is this: do the people in the family tend to “thrive” more than they would on their own? If the family is functional, this should be the case. They balance each other; they support each other; they help each other improve. They cooperate when it counts. You will not always agree on everything. Far from it. You might be a slob like Oscar in The Odd Couple while your sibling is very Felix-like. And you’re both “right” under different circumstances and for different tastes.

Many sports teams have a variety of people who excel at running, blocking, throwing, or scoring. In baseball or American football, for instance, very different people fill different roles, both physically and temperamentally. An offensive lineman in football will typically be bigger and stronger than a quarterback. Moreover, if the lineman gets “angry,” they might be able to block better on the next play. By contrast, the quarterback must remain calm, cool, and confident under pressure. He must try to put away any fear, anger, or depression he feels on the way to the huddle, before he gets there and certainly before the snap. When teams are working well, teammates don’t criticize each other for their differences; they work together to win the game rather than wasting time pointing fingers or trying to assign blame. On a baseball or football team, there is no question that the individual does better because of his teammates. Working together, they can solve problems, win trophies, and have more fun than they could individually.

Your right eye sees the world a little differently from your left eye. Thank goodness! Your brain normally combines these two somewhat different, flat 2-D pictures into a single 3-D picture! Your brain does not argue about which of these views is “correct.” It certainly does not instigate religious wars over it. I say that the brain “normally” does this. However, if a person’s eyes do not move or align smoothly from birth, or if one eye is extremely near-sighted, the brain may “choose” one eye to pay attention to. In this case, it seems the two images are so discrepant that the brain “gives up” trying to integrate them and instead uses just one image. In a condition such as “amblyopia,” the brain relies mainly on the input from one eye. This condition is a distinct disadvantage in many sports.

In boxing, for example, it is literally a show-stopper. A fighter might look like hamburger, but the fight goes on. If, however, a cut above his or her eye lets blood drip down and obscure the vision in that eye, the fight is stopped. That fighter can no longer see depth well and also loses some peripheral vision. It is no longer deemed a “fair” fight. Anyway, it seems the human brain does have some limits as to how far two discrepant views can be reconciled, at least when it comes to vision. Is there a limit to how much a family may disagree productively and still be functional? This is a good question, but one to return to later. Instead, let’s first turn to what are called “dysfunctional families.”

We said that in a functional family or team, people are better off than they would be on their own. In a dysfunctional family, on the other hand, people get mostly grief, judgement, criticism, competition, and lies. Why does this happen? Often, dysfunctional behaviors are handed down from generation to generation through social learning, among other things. If one family has too many dysfunctional behaviors, they form a “vicious circle” that makes things worse and worse. For example, imagine a family that is basically healthy but does not engage in “alternatives thinking.” They see a situation, come up with an idea, and, unless there is imminent danger, execute the idea as soon as possible. They will end up in a lot of trouble with that strategy. However, if they don’t engage in blame-finding but instead engage in collective improvement, they will learn over time to make fewer and fewer mistakes. Everyone will benefit from being in the family. But if a family fails to consider multiple alternatives before committing to a course of action and is stuck in a cycle of blaming each other without ever improving, then it will probably be dysfunctional. People will give more and get less in return than if they had been working alone. That does not mean there are zero benefits within a dysfunctional family. Its members may still cover for each other, help each other, provide emotional support, and so on. But the costs outweigh the benefits in the long run.

People who come from functional families tend to see the world in a very different way compared with people who come from dysfunctional families. Obviously, there are all sorts of exceptions as well as other factors at play, but other things being equal, these families of origin color our perceptions of daily life and predispose us to certain actions. Depending on the circumstances, some of what we think of as “dysfunction” could actually be “function” instead. Suppose, for instance, you and two siblings suddenly found yourselves attacked by a bear. It may be the best thing imaginable to take the first action you think of without trying to over-analyze the situation. Or not. It may well depend on the bear. And therein lies the rub.

Our own personal experiences are always a teeny sliver of all possible situations. So, your experience with a bear, bee, or bank may be quite different from mine. As a consequence, we may have different ideas about what constitutes function or dysfunction. In terms of the argument I am about to make, it doesn’t really matter which is “better” or “worse.” All that matters is that we agree some families provide a healthier environment than others. And attitudes are not all that are handed down; so are “ways to do things.”

Perhaps the arbitrary nature of what we consider “intelligent” wisdom handed down in families is best illustrated by a story about making a holiday ham. In the kitchen, a 10-year-old boy asks: “How come you’re slicing off the ends of the ham?”

His mom answers, “Oh, that’s the way your grandpa always did it.”

Son: “So, why did he do it?”

Mom: “Oh, well. Uh. I don’t really know. Let’s go ask him.”

Son: “Hey, Grandpa, how come you cut the ends of the ham off?”

Grandpa: “Well, sonny. It’s because… it’s because… let’s see. That’s the way my mom always did it.”

As it turns out, the 90-year-old great-grandma was at the feast as well. Though she was a bit hard of hearing, they eventually got her to understand the question, and she answered, “Oh, I always used to cut off the ends because I only had one small pan and it wouldn’t fit. No reason for you all to do it now.”

And there you have it in a nutshell. We are all walking around with thousands if not millions of little bits of “folk wisdom” we learned through our family interactions. In most cases, we’re not even aware of them. In virtually no case did we ask where this folk wisdom came from. Have any of us actually tested one of these out in our own life to see whether it still holds up? And then what? Are you going to inform the others in the family that what everyone believes may not actually be true, at least not in every case? Maybe. Most people do not, in my experience. In addition, it seems that if you are from a “functional” family, you are much more likely to share this kind of experience (though even then, people don’t do it 100% of the time). Your family will often be interested in it and want to learn more. If you are from a more dysfunctional family, you might expect them to put you down and try to shoot holes in your example. They might laugh at you. They might just not talk to you. So, what do you do?

We can extend these ideas to much broader notions such as a clan, a team, a business, or a nation. For people who were not lucky enough to grow up in a functional family, trust and cooperation come hard. And that’s a sad thing, because your understanding of what a bee or a bear or a bank is like will tend to be based on your own experience, with very little reliance on the experiences of others. You are one person. There are 7 billion on the planet. So, yes, you can rely on your own experience and dismiss everyone else’s. Good luck.

Even a functional family may draw the boundaries around itself so tightly and firmly that anyone “inside” the circle of trust is trusted, but anyone outside is fair game to be taken advantage of. At the same time, such a family regards anyone outside as a threat who must “obviously” be out to get them. People from this type of family do know cooperation and trust, but find it nearly impossible to extend the concept across boundaries of family, culture, or nation. They are happy to hear about their brother’s experiences with bees, but they are not much interested in the experiences of their cousins from halfway around the world.

Everyone must decide for themselves how much to rely on their own experiences and how much to rely on close relatives, authority figures, ancient teachings, or the vast collective experience of humanity. Of course, it doesn’t have to be an either/or thing. You might “weight” different experiences differently. And that weighting may reasonably be quite different for different types of situations and different people. For instance, if your cousin is a smoother talker, vastly handsome, and twenty years younger, you might not put much stock in his or her advice about how to “hook up.” You might instead put more credence in someone at work who is in a similar situation. You might put very little stock in the experiences of people from a culture that relies on arranged marriages. Surprisingly, precisely because they come from a very different situation and therefore have a quite different take on matters, they may give you very new and creative ways to approach your situation. For example, you might find that if you “pretend” you are already “pledged” to a partner your parents chose, dating might be less anxiety-provoking and more fun. You might actually be more successful. I’m not saying this specific strategy would work or that ideas from other cultures are always better than ones from your own culture. I am just saying that they need not be dismissed out of hand, not because it’s “politically correct” but because it is in your own selfish interest.

I’ve already mentioned in previous blog posts that people are highly related and interconnected via genetics, their environmental interchanges, their informational interchanges, and the emotional tone of their interactions. Because people are so interconnected, you can find much wisdom in the experiences of others. But there is another, largely underused aspect of this vast interrelatedness. I call it familial gradient cognition. Or, if you like, “Mom’s somewhat like me.”

To understand this concept and why it is important, let’s first take a medical example. However, this type of thinking is not limited to medical problems; it applies to virtually everything. So, you have a pain in your right hip. What is the cause, and how do you fix it? That’s your question for the doctor or, more likely, the nurse practitioner. They will typically ask about your activity, diet, what you’ve done lately, when the pain comes and goes, etc. They may run various tests and decide you have sciatica. This, in turn, leads to a number of possible treatments. When I had sciatica, I was referred to a sports medicine doctor and got acupuncture. It worked. (Later, I discovered an even better treatment: the books of John Sarno.) Anyway, we would call this a success, and it seems like a reasonable process. But is it?

The medical professional’s knowledge is based on watching other experts, book learning, their own experience, etc. So they basically engage in this multiplication of experience. The modern doctor’s knowledge draws on literally millions of cases, far more than he or she could possibly observe first-hand. But what potentially useful information was completely omitted from the process described above? Hint: see the title of this blog post.

Yes, exactly. Throughout this whole process, no one asked me whether anyone in my family (e.g., my mom, dad, or brother) had had these symptoms. No one asked whether they had had any kind of treatment and, if so, what had and had not worked for them. Now, my brother, mom, and dad are especially closely related, but so are my four children and my grandparents, aunts, uncles, nieces, nephews, and grandchildren. And in most cases, it isn’t merely that we share more genes with each other than with humanity at large. We are also likely to share diet, routines, climate, history, and family stories and values. These too can play a part in promoting health. For example, did people in your family believe in “toughing it out,” or were they more inclined toward hypochondria? Chances are, you will tend to have similar attitudes.

In medicine, would it be better to make decisions based not just on the data of the one individual under treatment, but on the entire family tree, with more weight given to the data of other individuals the more closely related they are? Of course, family relations are only one way in which some individuals’ data will be more likely than others’ to be relevant to your case. For instance, people in the same age cohort, people who live in the same area, and people who are in similar professions or who work out the same number of hours a week that you do will be, other things being equal, more relevant than their opposites.

Of course, as I’ve already mentioned, modern medicine does take into account the life experiences of many other people. But these other “people” are completely unknown. Studies are collectively based on a hodgepodge of people. Some studies use random sampling, but even a random sample is limited by geography, age, condition, etc. Other studies use “stratified sampling” and report on various groups separately. Some studies are meta-analyses of other studies, and so on. But how similar or dissimilar these people were to each other on a thousand or a million potentially relevant factors is almost entirely lost in the reporting of the data. In practice, that hardly matters, because the doctor typically would not consult any article in response to your case anyway. He or she will base the judgment on just you and on what they know “in general,” which is based on a total mishmash of people.

Imagine instead that every person’s medical issues were known, as well as how everyone was related to everyone else, not only genetically but historically, environmentally, etc. And now imagine that, in making diagnostic decisions and choosing treatment options, the various trees of people who were “related” to you in these thousands of ways were weighted by how close they were on all these factors. Over time, the factors themselves could come to be weighted differently under different circumstances and symptoms, but for now, let’s just imagine they are treated equally. It seems clear that this would result in better decision making. Of course, one reason no one does this today is that keeping track of all that data is mind-boggling. Even if we had access to all the relevant data, we can’t lay out and overlay all these relationships mentally to make a decision (at least not consciously).
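As a rough illustration of the weighting idea in the paragraph above, here is a minimal Python sketch. It assumes each past case already comes with a closeness score to the current patient for each factor (genetic, diet, climate, and so on) on a 0 to 1 scale, and, as suggested above, it treats all factors equally by default. The names `RelatedCase`, `overall_closeness`, and `rank_treatments`, the factors, and the example cases are all hypothetical and made up purely to show the mechanics; this is not an existing system, and the numbers are not real data.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class RelatedCase:
    """Hypothetical record of a past case, described relative to the current patient."""
    name: str
    closeness: dict[str, float]  # factor -> closeness to the patient, 0.0 (unrelated) to 1.0 (identical)
    treatment: str
    worked: bool


def overall_closeness(case: RelatedCase, factor_weights: dict[str, float] | None = None) -> float:
    """Weighted mean of the per-factor closeness scores.

    Any factor missing from factor_weights gets weight 1.0, so by default
    every factor counts equally (the "treated equally" assumption).
    """
    weights = factor_weights or {}
    total = sum(weights.get(f, 1.0) for f in case.closeness)
    if total == 0:
        return 0.0
    return sum(weights.get(f, 1.0) * c for f, c in case.closeness.items()) / total


def rank_treatments(
    cases: list[RelatedCase], factor_weights: dict[str, float] | None = None
) -> list[tuple[str, float]]:
    """Score each treatment by its closeness-weighted success rate, best first."""
    weight_sum: dict[str, float] = {}
    success_sum: dict[str, float] = {}
    for case in cases:
        w = overall_closeness(case, factor_weights)
        weight_sum[case.treatment] = weight_sum.get(case.treatment, 0.0) + w
        if case.worked:
            success_sum[case.treatment] = success_sum.get(case.treatment, 0.0) + w
    scores = {
        t: success_sum.get(t, 0.0) / total
        for t, total in weight_sum.items()
        if total > 0  # skip treatments seen only in completely unrelated cases
    }
    return sorted(scores.items(), key=lambda item: item[1], reverse=True)


# Invented example cases, purely for illustration.
cases = [
    RelatedCase("brother", {"genetic": 0.5, "diet": 0.8, "climate": 0.9}, "acupuncture", True),
    RelatedCase("mom", {"genetic": 0.5, "diet": 0.9, "climate": 0.9}, "acupuncture", True),
    RelatedCase("cousin Billy", {"genetic": 0.125, "diet": 0.5, "climate": 0.6}, "surgery", False),
    RelatedCase("stranger A", {"genetic": 0.0, "diet": 0.2, "climate": 0.1}, "surgery", True),
    RelatedCase("stranger B", {"genetic": 0.0, "diet": 0.1, "climate": 0.3}, "acupuncture", False),
]

for treatment, score in rank_treatments(cases):
    print(f"{treatment}: {score:.2f}")
```

With these made-up numbers, acupuncture scores about 0.92 and surgery about 0.20, mainly because the cases closest to the patient favor acupuncture. A weighted success rate is only one simple way to combine cases; passing `factor_weights` (for example `{"genetic": 2.0}`) would let some factors count more than others, which is the kind of reweighting the text imagines happening over time.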

However, a powerful computer program could do this, and the result would almost certainly be better decisions. There are obvious and serious ethical concerns about such a system. In addition, the temptation to misuse it might be overwhelming. Such a system, if it did exist, would have to be cleverly designed to prevent any one power from “taking it over” for its own ends. There would also have to be a way to use all these similarities while preventing the individuals’ identities from being revealed. All of that, however, is grist for another mill. Let’s return to the basic idea: making decisions by using multiple matrices of similarity to the current case rather than relying on general rules based on what has been found to be true “of people.”

This may be essentially what the human brain already does. A small-town doctor in the last century would see people on multiple occasions, see entire families, and would undoubtedly perceive patterns of similarity based on those specific circumstances. The Smith family would all come in with allergies when the cottonwood trees bloomed. And so on. But he or she sees only a limited number of cases, even over an entire lifetime. Suppose instead that she or he could “see” millions of cases as well as their relationships to each other. Such a doctor might well be able to perform as well as the computer, and much better than doctors can today.

Can it be done better by collecting huge families of data and having a computer do the decision making? Or can it be done better by giving human experts access to much larger databases of interrelated case studies? What are the potential societal and ethical implications of each approach, and what safeguards would each need?

The medical domain is only one of thousands of domains where decision making could be improved this way. For example, one could use a similar approach to diagnose problems with automobiles or tires, to understand students’ difficulties in learning trigonometric functions, or to determine which fertilizers and watering schedules produce which results for which crops in which soils. You might call this “whole body” decision making. The term is also reminiscent of the phrase “Put your whole body into it” (as when cracking a home run into the upper deck!).

It is also reminiscent of the following situation. When you accidentally burn your finger, it does not just affect your finger. You jump back with your whole body. There are longer-lasting effects on your brain, your stress hormones, and your blood pressure. And various organs and cell types will be involved in healing the burn on your finger. Your body works as a whole. But it is not an undifferentiated whole. Your earlobe may not be much involved in healing your finger. The body is tuned to have communication paths and supply chains where they are needed. It’s had four billion years to work this out.

Of course, the way the body’s parts interact is largely, though not wholly, determined by architecture. Even if your body “decided” that your earlobe should be involved, there would be no way for it to do that. To some extent, it can modify the interactions, but only within very predefined limits. The brain, on the other hand, is much more flexible when it comes to relating one thing to another. We can learn virtually any association. But, at least consciously, we are limited in the number of things and experiences we knowingly take into account while making a decision.

Listening to what people say might lead you to believe that they very often base decisions on only one similar case. “Sciatica, you say? Oh, yeah. My cousin Billy had that. Had an operation to remove a disk and the pain totally vanished. Of course, three months later it was back. In his …well… back in his back.” There could be more sophisticated pattern matching going on than meets the eye. Sadly, though, laboratory experiments show that, under controlled conditions, people often suffer from a number of reasoning flaws. I believe that the current crop of difficulties people have with reasoning is not inevitable. I think these difficulties stem from our cultural stories, and with new cultural stories, we could do a better job of thinking. And we might be able to further multiply our thinking ability by giving people the right kind of high-speed access to thousands or millions of similar cases, presented according to how the various cases are related. Or, we could have the computer do it.

Indeed, speaking of “family stories” that are common in our culture, I actually think that we have a “hierarchy” of thinking based on a patriarchal family structure. We do experiments and report on a teeny and largely preset sliver of the reality that was the experiment. A person reads about this and remembers a teeny sliver of what was in the paper. When it comes to a specific case, the person may or may not consciously remember that sliver. This is the “rule-based” approach, and it is probably better than nothing. A more holistic, experience-based approach is to allow the current case to “resonate” with a vast amount of experience. Of course, both methods can be deployed together, and perhaps there can even be a meaningful dialogue between them. But it may be worth considering a more “whole body” approach to complex decision making.

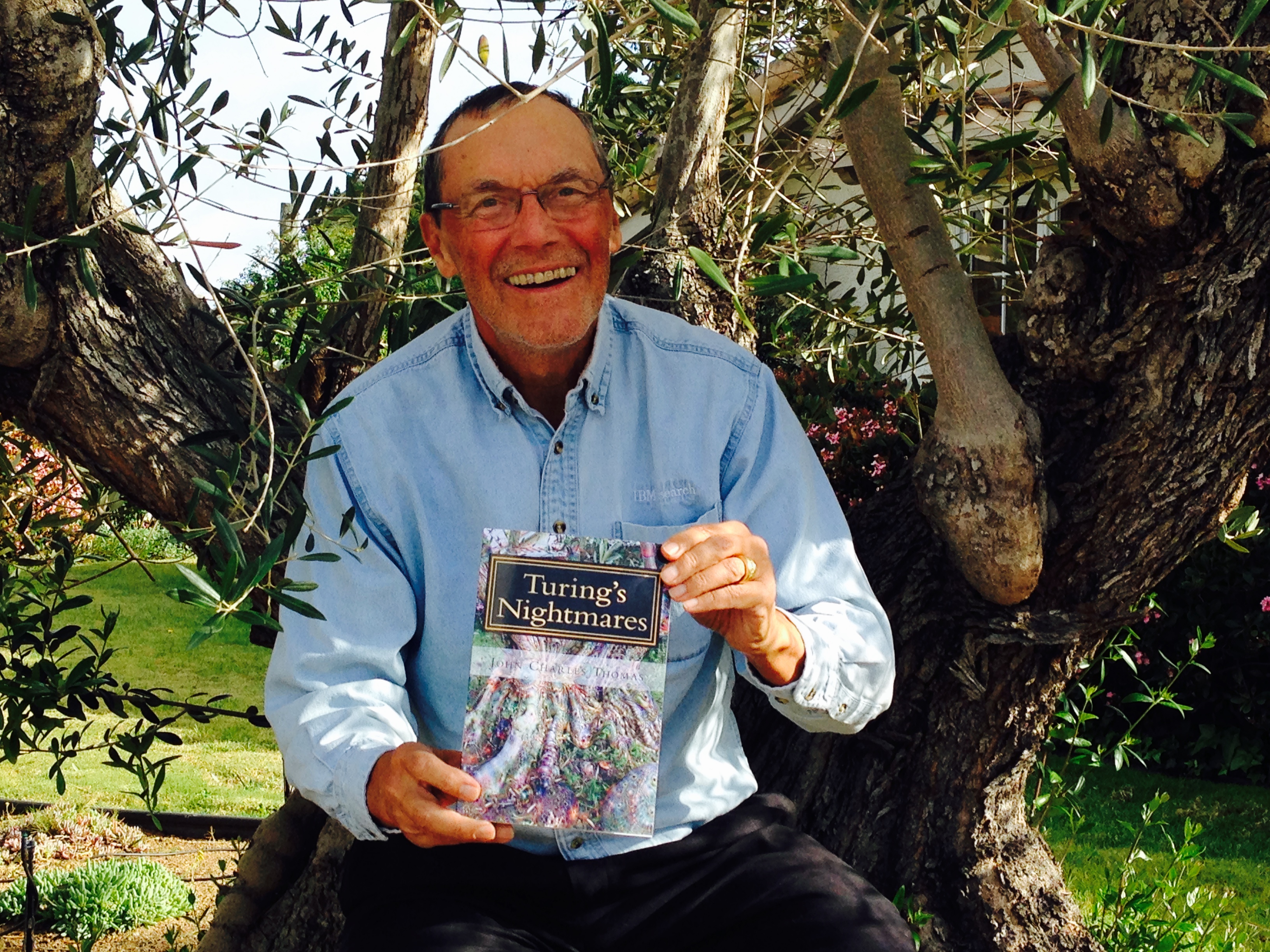

(The story above and many cousins like it are now compiled in a book available on Amazon: Tales from an American Childhood: Recollection and Revelation. In it, I recount early experiences and then relate them to contemporary issues and challenges in society.)

https://www.amazon.com/author/truthtable

twitter: JCharlesThomas@truthtableJCT